SCADA cyber security failures rarely come from one exotic zero-day. In critical infrastructure environments, the highest-impact incidents are often enabled by familiar weaknesses: flat networks, unmanaged remote access, legacy protocols, and gaps between what teams believe is deployed and what an attacker can actually reach.

This article breaks down the most common SCADA security vulnerabilities seen in industrial networks, why they persist, and how to validate them in a way that does not touch production systems. For additional background on controls and program building, see Frenos’ Complete Guide to OT Security: Protecting Industrial Control Systems and the overview on SCADA Security. If you are evaluating testing approaches, the guide on OT Penetration Testing for ICS & SCADA is a useful companion.

What SCADA cyber security means in practice (concise definition)

SCADA cyber security is the set of technical controls and operational practices used to protect supervisory control and data acquisition systems and their connected industrial control system components, including HMIs, engineering workstations, historians, PLCs/RTUs, communications infrastructure, and the safety and business processes that depend on them.

Unlike typical IT environments, SCADA security outcomes must be measured against availability and process integrity first. A “successful” security change that causes nuisance trips, latency, packet loss, or unexpected device behavior is still a failure. That constraint shapes everything: monitoring, segmentation, patching, and especially validation testing.

Why SCADA environments stay vulnerable

Most operators are managing a mix of modern and legacy assets under constraints that are rational for operations but difficult for security:

- Long lifecycle systems: PLCs and RTUs can remain deployed for decades. Vendor support windows do not match operational reality.

- Fragile protocols and devices: Many field protocols were designed for reliability, not adversarial conditions. Some devices react poorly to scanning or malformed traffic.

- Split ownership: Engineering, operations, and IT security may each control part of the stack. Gaps form between the network, endpoints, and the control logic.

- Remote connectivity: Vendors and internal teams need access for maintenance. If remote access is not engineered with strong identity, segmentation, and monitoring, it becomes an attacker’s easiest door.

- Limited testing tolerance: Many teams avoid deep testing because they cannot risk disrupting production. This is where safe validation approaches, including digital twins, become critical.

The most common SCADA security vulnerabilities in industrial networks

Below are the vulnerabilities that most frequently create real attack paths to high-impact OT assets. These are written from the perspective of what a red team or adversary can chain together, not just what a vulnerability scanner can list.

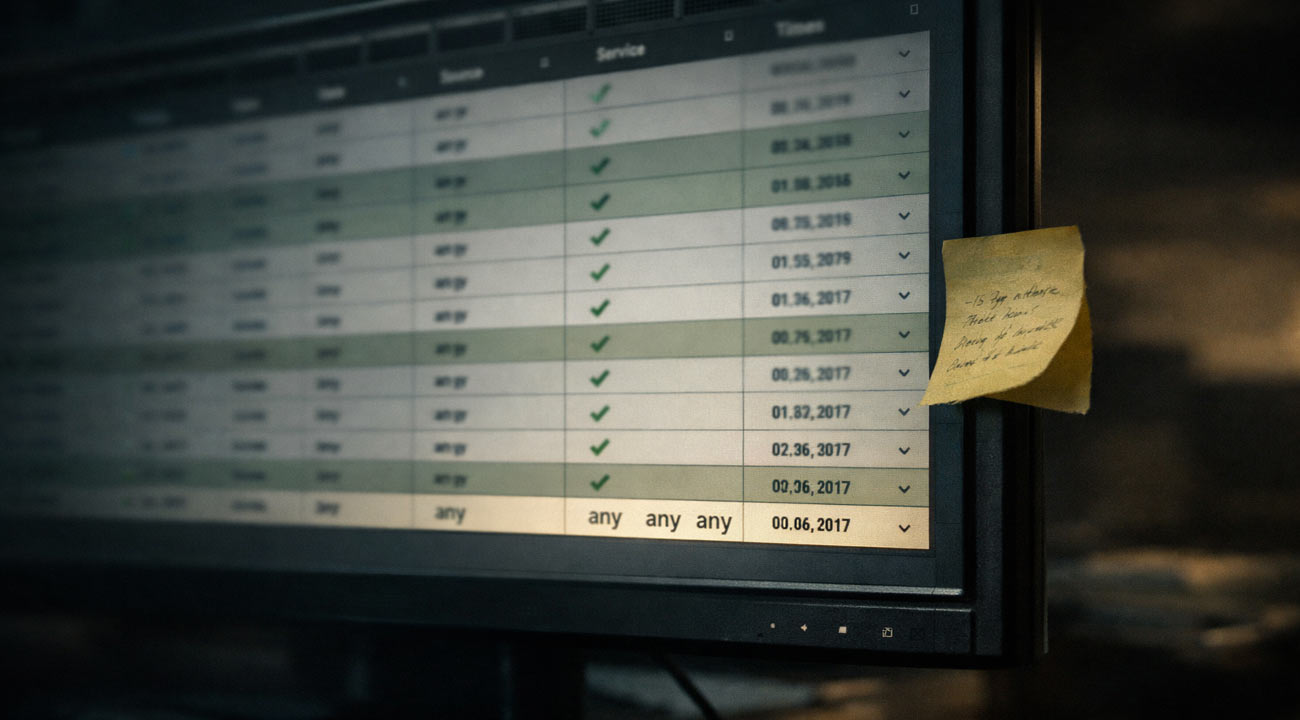

Flat or porous network segmentation

A common finding is a SCADA network that is logically separated on paper but reachable in practice through shared services, dual-homed hosts, permissive firewall rules, or poorly controlled jump paths. Typical symptoms are broad “any-any” rules between zones, shared management VLANs, and engineering workstations that can route to both IT and OT.

Why it matters: segmentation failures turn a single compromised Windows endpoint into a lateral movement platform to HMIs, historians, and engineering tools.

What to validate: actual reachability and allowed protocols across zones, not just diagrams. Validate whether OT critical assets are reachable from IT, vendor remote access segments, or wireless networks.
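As a minimal sketch of the rule-review part of this check, assume the firewall policy has been exported into simple records. The `Rule` fields, zone names, and sample rules below are hypothetical and do not follow any vendor's export format. The function flags allow rules that let a non-OT or wildcard source reach an OT zone.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Rule:
    src_zone: str
    dst_zone: str
    port: str    # "any" or a single port number as text
    action: str  # "allow" or "deny"

# Hypothetical zone names used only for this illustration.
OT_ZONES = {"ot-control", "ot-supervisory"}

def risky_rules(rules):
    """Return allow rules from a non-OT (or wildcard) source into an OT zone."""
    findings = []
    for r in rules:
        if r.action != "allow":
            continue
        src_is_external = r.src_zone == "any" or r.src_zone not in OT_ZONES
        dst_is_ot = r.dst_zone == "any" or r.dst_zone in OT_ZONES
        if src_is_external and dst_is_ot:
            findings.append(r)
    return findings

rules = [
    Rule("it-corp", "ot-supervisory", "any", "allow"),
    Rule("ot-supervisory", "ot-control", "502", "allow"),
    Rule("vendor-vpn", "ot-control", "3389", "allow"),
    Rule("it-corp", "dmz", "443", "allow"),
    Rule("any", "any", "any", "deny"),
]

for r in risky_rules(rules):
    breadth = "any port" if r.port == "any" else f"port {r.port}"
    print(f"{r.src_zone} -> {r.dst_zone} ({breadth})")
```

This sketch ignores rule ordering and shadowing, so it is a starting point for review rather than proof of reachability. Findings still need to be confirmed against observed traffic or a model of the environment.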

Insecure remote access and vendor pathways

Remote access is necessary, but it is often built for convenience: shared accounts, weak MFA enforcement, VPN split tunneling, persistent sessions, or vendor tools installed on critical hosts.

Why it matters: remote access is frequently the highest-probability initial access vector.

What to validate: authentication strength, session controls, device posture checks, approval workflows, logging completeness, and whether remote access terminates into a tightly controlled jump environment.

Weak identity and privilege on engineering and operator stations

Engineering workstations and HMIs often accumulate tools, drivers, and local admin privileges. Shared credentials, cached passwords, and service accounts with broad rights are common.

Why it matters: if an attacker reaches an engineering workstation, they may be one step away from logic upload/download capability, firmware changes, or modification of setpoints through engineering software.

What to validate: least privilege on engineering tools, credential hygiene, local admin sprawl, and whether privileged actions are logged and reviewed.

Legacy and unauthenticated industrial protocols

Many industrial protocols lack authentication, integrity protection, or encryption. Even when a vendor offers secure modes, they are not always enabled due to compatibility constraints.

Why it matters: if an attacker can position on the network, they may be able to read or write process values, issue control commands, or interfere with communications.

What to validate: where cleartext industrial protocols traverse, whether secure variants exist, and whether compensating controls like segmentation, allowlisting, and protocol-aware inspection are present.
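One way to start answering "where do cleartext industrial protocols traverse" is to filter passively collected flow records by well-known default ports. The sketch below assumes flows are available as `(source zone, destination zone, destination port)` tuples. The zone names and sample flows are hypothetical. Port matching is only a heuristic, since protocols can run on non-default ports.

```python
# Default ports for industrial protocols whose classic forms lack authentication.
INDUSTRIAL_PORTS = {
    102: "S7comm (ISO-TSAP)",
    502: "Modbus/TCP",
    20000: "DNP3",
    44818: "EtherNet/IP",
}

def cross_zone_industrial_flows(flows):
    """Return (src_zone, dst_zone, protocol) for industrial flows that cross a zone boundary."""
    hits = []
    for src_zone, dst_zone, dst_port in flows:
        protocol = INDUSTRIAL_PORTS.get(dst_port)
        if protocol and src_zone != dst_zone:
            hits.append((src_zone, dst_zone, protocol))
    return hits

# Hypothetical flow records: (source zone, destination zone, destination port).
observed_flows = [
    ("ot-supervisory", "ot-control", 502),
    ("ot-control", "ot-control", 502),
    ("it-corp", "ot-control", 44818),
    ("it-corp", "dmz", 443),
]

for src, dst, proto in cross_zone_industrial_flows(observed_flows):
    print(f"{proto}: {src} -> {dst}")
```

Each hit is a candidate for a compensating control: a tighter allowlist, protocol-aware inspection, or a secure protocol variant where the devices support one.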

Patch and vulnerability management gaps that are understandable but exploitable

OT environments cannot patch like IT. Maintenance windows are limited, validation is slow, and some patches risk breaking deterministic behavior.

Why it matters: unpatched Windows systems, exposed services, and known device vulnerabilities can provide predictable footholds.

What to validate: a risk-based vulnerability management approach that prioritizes exploitable attack paths to critical functions. This ties directly to an OT Risk Assessment rather than a generic CVSS list.
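To illustrate the difference between path-based prioritization and a plain CVSS sort, here is a small sketch. The `Finding` fields, the finding names, and the ordering rule are assumptions made for illustration, not a standard scoring method. Findings that are reachable and lead to a critical function rank first, and CVSS acts only as a tie-breaker.

```python
from dataclasses import dataclass

@dataclass
class Finding:
    name: str
    cvss: float
    reachable: bool          # an attacker can reach the affected service
    leads_to_critical: bool  # exploitation advances a path to a critical function

def prioritize(findings):
    """Sort by attack-path relevance first, then reachability, then CVSS."""
    return sorted(
        findings,
        key=lambda f: (f.reachable and f.leads_to_critical, f.reachable, f.cvss),
        reverse=True,
    )

# Hypothetical findings; the names are placeholders, not real advisories.
findings = [
    Finding("finding-a", cvss=9.8, reachable=False, leads_to_critical=False),
    Finding("finding-b", cvss=6.5, reachable=True, leads_to_critical=True),
    Finding("finding-c", cvss=7.5, reachable=True, leads_to_critical=False),
]

ranked = prioritize(findings)
for f in ranked:
    print(f.name, f.cvss)
```

In this example the 6.5 finding outranks the 9.8 one because only the former sits on a credible path to a critical function.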

Poor asset inventory and “unknown” exposures

Teams often lack a complete picture of what is connected, which firmware versions exist, and what services are exposed. Shadow engineering laptops, temporary vendor gear, and forgotten test networks show up repeatedly.

Why it matters: you cannot defend what you cannot see, and you cannot segment precisely without knowing required communications.

What to validate: passive discovery coverage, reconciliation against procurement and maintenance records, and identification of unauthorized paths between zones.
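Reconciliation can be as simple as comparing two sets. The sketch below assumes both passive discovery results and asset records can be reduced to a shared identifier such as a hostname or MAC address. The identifiers shown are hypothetical.

```python
def reconcile(observed, recorded):
    """Compare assets seen on the network with assets listed in records."""
    observed, recorded = set(observed), set(recorded)
    return {
        # On the wire but absent from records: possible shadow or vendor gear.
        "unrecorded": sorted(observed - recorded),
        # In records but never seen: possible monitoring coverage gap or retired asset.
        "not_observed": sorted(recorded - observed),
    }

# Hypothetical identifiers for illustration.
passive_discovery = ["plc-01", "plc-02", "hmi-01", "laptop-unknown"]
asset_records = ["plc-01", "plc-02", "hmi-01", "rtu-07"]

result = reconcile(passive_discovery, asset_records)
print(result)
```

Both lists matter: unrecorded devices point to unknown exposure, while recorded devices that are never observed may indicate segments the passive sensors do not cover.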

Monitoring blind spots and alert fatigue

Many environments deploy some form of SCADA security monitoring or IDS, yet critical events are not actionable due to baseline gaps, missing context, or poor tuning.

Why it matters: detection that cannot distinguish normal from abnormal does not reduce dwell time.

What to validate: coverage of key segments, fidelity of alerts for lateral movement and remote access misuse, and correlation with endpoint telemetry on Windows-based OT systems.

Backup, recovery, and configuration integrity weaknesses

Backups exist, but recovery is not tested, offline copies are missing, or control logic backups are incomplete. Configuration drift between “golden configs” and actual running configs is common.

Why it matters: ransomware or destructive attacks often target recovery paths and engineering repositories. Process integrity incidents can persist if you cannot restore known-good states.

What to validate: restore time objectives in OT terms, ability to rebuild engineering workstations, and integrity controls for PLC logic and critical configuration.
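A basic integrity control for logic and configuration is to compare cryptographic digests of the running artifacts against a known-good baseline. The sketch below uses in-memory byte strings so that it is self-contained. In practice the bytes would come from exported project files or configuration backups, and the artifact names here are hypothetical.

```python
import hashlib

def digest(data: bytes) -> str:
    """SHA-256 hex digest of an artifact's contents."""
    return hashlib.sha256(data).hexdigest()

def find_drift(golden: dict, running: dict) -> dict:
    """Report artifacts that differ from, or are missing on either side of, the baseline."""
    report = {}
    for name in sorted(golden.keys() | running.keys()):
        if name not in running:
            report[name] = "missing from running set"
        elif name not in golden:
            report[name] = "no golden baseline"
        elif digest(golden[name]) != digest(running[name]):
            report[name] = "content differs"
    return report

# Hypothetical artifacts for illustration.
golden = {"plc-01.logic": b"rung1;rung2", "hmi-01.cfg": b"setpoint=40"}
running = {
    "plc-01.logic": b"rung1;rung2;rung3",
    "hmi-01.cfg": b"setpoint=40",
    "rtu-07.cfg": b"addr=7",
}

drift = find_drift(golden, running)
for name, status in drift.items():
    print(f"{name}: {status}")
```

A digest mismatch only shows that something changed. Deciding whether the change was authorized still requires change records and engineering review.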

A practical framework for SCADA vulnerability assessment and penetration testing

For most operators, the goal is not to run a loud test. The goal is to answer specific questions: Can an attacker reach control assets? What is the most credible chain from initial access to process impact? Which controls break that chain with minimal operational risk?

Use this framework to structure SCADA vulnerability assessment and SCADA penetration testing in a way that aligns with how attacks actually unfold.

Step 1: Define the crown jewels and impact scenarios

Identify high-impact outcomes, such as loss of view, loss of control, unsafe state, production outage, environmental release, or quality deviation. Map these to specific assets and functions: HMIs, engineering workstations, historians, domain services used in OT, remote terminal units, and safety-related interfaces.

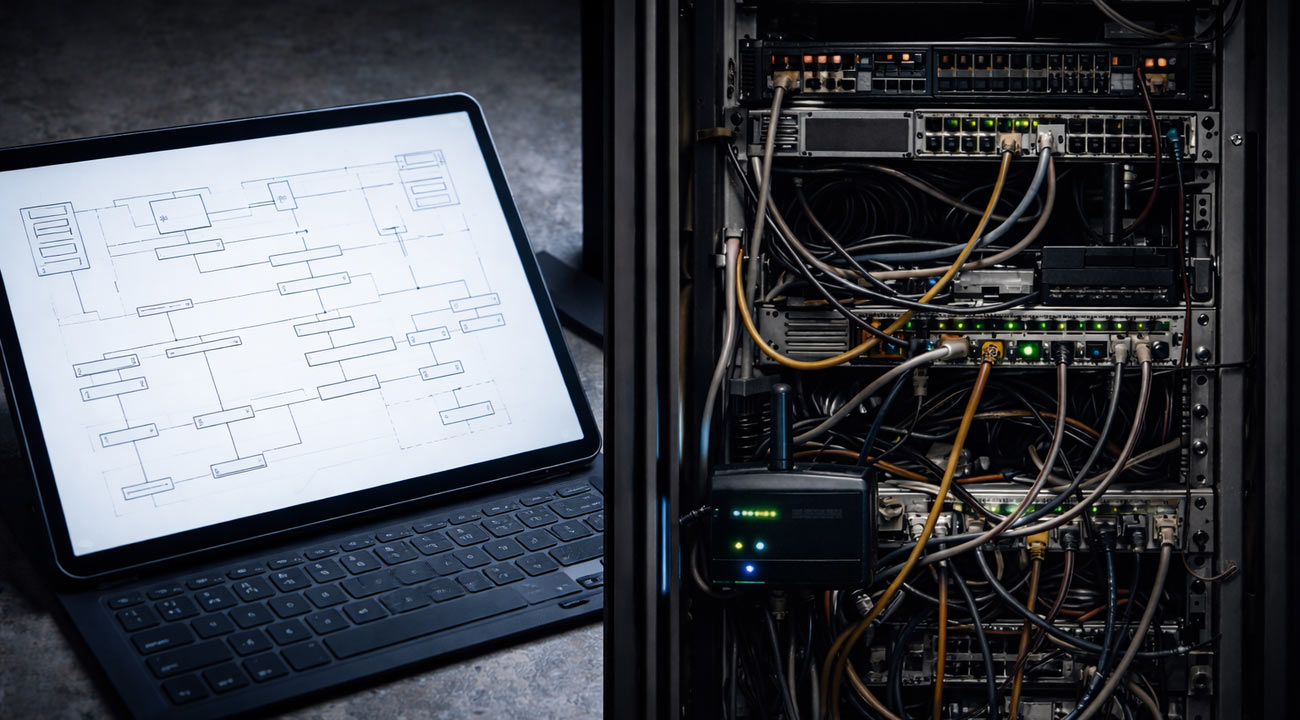

Step 2: Build an accurate communication map

Document and validate required flows: who talks to whom, on what ports and protocols, at what times. This is the foundation for SCADA network segmentation changes and for tuning SCADA intrusion detection.
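A minimal sketch of this step, assuming flow records are available as `(source, destination, port)` tuples from passive capture: count what is actually observed and compare it with the documented set of required flows. The host names and flows are hypothetical.

```python
from collections import Counter

def summarize_flows(flow_records):
    """Count observations per (source, destination, port) tuple."""
    return Counter(flow_records)

def undocumented(flow_counts, documented):
    """Return observed flows that do not appear in the documented set."""
    return sorted(flow for flow in flow_counts if flow not in documented)

# Hypothetical observations: (source, destination, destination port).
flow_records = [
    ("hmi-01", "plc-01", 502),
    ("hmi-01", "plc-01", 502),
    ("historian-01", "plc-01", 502),
    ("eng-ws-01", "plc-01", 44818),
]
documented_flows = {
    ("hmi-01", "plc-01", 502),
    ("historian-01", "plc-01", 502),
}

counts = summarize_flows(flow_records)
gaps = undocumented(counts, documented_flows)
print(gaps)
```

Each undocumented flow is either a legitimate requirement missing from the documentation or a path that segmentation should block. Both outcomes improve the map.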

Step 3: Enumerate identities and trust relationships

List service accounts, shared credentials, local admin groups, remote access identities, and vendor accounts. Pay special attention to where a single credential provides broad reach.
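The "single credential with broad reach" check can be sketched as follows, assuming you already have a mapping from each credential to the hosts where it is valid and a mapping from hosts to zones. The account names, hosts, zones, and the `max_hosts` threshold are hypothetical.

```python
def broad_credentials(valid_on, host_zone, max_hosts=2):
    """Flag credentials valid on many hosts or on hosts in more than one zone."""
    flagged = {}
    for cred, hosts in valid_on.items():
        zones = {host_zone[h] for h in hosts}
        if len(hosts) > max_hosts or len(zones) > 1:
            flagged[cred] = {"hosts": len(hosts), "zones": sorted(zones)}
    return flagged

# Hypothetical inventory for illustration.
host_zone = {
    "file-01": "it-corp",
    "hist-01": "ot-supervisory",
    "eng-ws-01": "ot-supervisory",
    "hmi-01": "ot-supervisory",
}
valid_on = {
    "svc-backup": ["file-01", "hist-01", "eng-ws-01"],
    "operator1": ["hmi-01"],
    "vendor-shared": ["eng-ws-01", "hmi-01"],
}

flagged = broad_credentials(valid_on, host_zone)
print(flagged)
```

Credentials that span zones deserve attention first, because they let an attacker cross a boundary without exploiting anything.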

Step 4: Identify attack paths, not just vulnerabilities

A vulnerability is only urgent if it is reachable and useful to an attacker. Model realistic attacker movement: initial access, pivoting, privilege escalation, and interaction with OT protocols.
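Attack path modeling can be approximated with a directed graph in which an edge means "an attacker on A can reach and use B". The sketch below runs a breadth-first search from an assumed initial access point to a target asset. The node names and edges are hypothetical, and real models also weigh privileges and exploit difficulty, which this example omits.

```python
from collections import deque

def shortest_path(edges, start, target):
    """Breadth-first search over directed 'can reach' edges; returns a node list or None."""
    graph = {}
    for src, dst in edges:
        graph.setdefault(src, []).append(dst)
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        for nxt in graph.get(node, []):
            if nxt not in visited:
                visited.add(nxt)
                queue.append(path + [nxt])
    return None

# Hypothetical reachability edges for illustration.
edges = [
    ("phished-laptop", "it-file-server"),
    ("phished-laptop", "jump-host"),
    ("it-file-server", "historian"),
    ("jump-host", "eng-workstation"),
    ("historian", "eng-workstation"),
    ("eng-workstation", "plc-01"),
]

path = shortest_path(edges, "phished-laptop", "plc-01")
print(" -> ".join(path))
```

Nodes that appear on every path to the target, such as the engineering workstation in this example, are the chokepoints where a single control breaks the most chains.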

Step 5: Validate safely, then remediate and re-validate

Validation should confirm what is exploitable without risking operations. Remediation should focus on breaking the path with least disruption: segmentation rules, privileged access controls, hardening, and monitoring improvements. Then re-test to ensure the path is truly closed.

This is where many programs stall: traditional approaches either avoid deep validation or require intrusive testing windows. Frenos focuses on enabling full-scope validation without touching production systems.

How to test SCADA cyber security without disrupting production: digital-twin-based validation

A core objection to deep SCADA testing is valid: active scanning and exploit attempts can crash fragile devices, overload links, or create unpredictable behavior. That does not mean you should accept uncertainty.

Frenos’ approach is to use a digital twin of the industrial environment so security teams can safely simulate real cyberattacks and uncover vulnerabilities across the full control system without risking operational disruption. Instead of testing on production, you validate attack paths and control effectiveness in a representative environment.

What this enables in practice:

- Full-scope testing with near-zero assessment downtime (when the assessment is performed against the twin)

You can validate segmentation, identity controls, and exploitability of reachable services without requiring production outages.

- Higher confidence in attack path analysis

Rather than stopping at “this port is open,” you can validate whether an attacker can chain misconfigurations and weaknesses to reach the highest-risk assets.

- Repeatable security validation

After remediation, you can re-run scenarios to confirm changes did what you expected. This moves teams toward continuous security validation, not one-time reporting.

- Safer evaluation of detection and response

You can test SCADA security monitoring and SCADA intrusion detection by generating controlled adversary behaviors and observing whether alerts fire and whether triage data is sufficient.

If you are considering digital twin readiness, this roadmap for OT Digital Twins provides a practical view of what data sources are typically used and how to phase implementation.

What you should expect as deliverables (and how to make them actionable)

Whether you use a digital twin approach or a more traditional engagement, insist on outputs that help operations teams fix real risk.

Actionable deliverables should include:

- Attack path maps to highest-risk assets: a clear chain from initial access to impact, including required privileges, reachable segments, and key chokepoints.

- Evidence-backed findings: proof of reachability and exploitability where relevant, tied to environment context.

- Prioritized remediation plan: steps that reduce risk with minimal operational disruption, including segmentation rule changes, privileged access controls, hardening recommendations, and monitoring tuning.

- Validation guidance: how to confirm fixes worked, and which scenarios to re-test.

- Stakeholder-specific summaries: an engineering-friendly view (what to change and why) and an executive view (risk and priority), without diluting technical accuracy.

If you are evaluating approaches, compare how each method handles safety, scope, and re-validation. Traditional tests can be valuable, but in production they are often limited by operational risk and access restrictions. Digital-twin-based testing is designed to reduce those limits while keeping outcomes grounded in realistic attack behavior.

FAQs

Will SCADA cyber security testing disrupt production?

It can if testing is performed directly on production systems with active scanning or exploit attempts, especially in fragile or bandwidth-constrained segments. A safer option is to validate attack paths in a digital twin of the industrial environment, allowing full-scope OT and SCADA security testing without touching production systems.

Is a digital twin based approach better than a traditional SCADA penetration test?

They solve different constraints. Traditional testing can be effective when you have well-defined windows and stable targets, but it is often limited by operational risk and access restrictions. A digital twin approach prioritizes safety and breadth, enabling deeper validation of segmentation, identity, and protocol abuse scenarios without putting operations at risk, and it supports repeatable re-testing after fixes.

How long does a SCADA vulnerability assessment take?

Timing depends on environment size, data availability, and the scope of attack paths being validated. The practical way to estimate is to break the work into phases: discovery and data collection, modeling and attack path analysis, validation testing, and remediation planning. Digital-twin-based testing can reduce the need for production downtime, which often shortens scheduling delays even when the technical work is similar.

What do we get at the end of an OT and SCADA security assessment?

You should expect prioritized findings tied to credible attack paths, evidence showing reachability and exploitability where applicable, and a remediation plan that focuses on the highest-risk assets. The most useful outputs include attack path maps, segmentation and remote access improvement recommendations, and a re-validation plan to confirm fixes.

Do we need perfect data to build a digital twin, and are we mature enough?

Perfect data is not required. Most environments start with a practical baseline: network topology and flows, asset inventory sources, and configuration information for key segments. The twin can be built iteratively, focusing first on the zones and assets that drive the highest impact scenarios, then expanding coverage over time.

Call to Action

If you want to identify the most likely attack paths into your SCADA environment and validate them safely, request an OT Security Assessment with Frenos. You will get evidence-backed findings mapped to high-risk assets and a remediation plan that reduces risk without endangering production.
